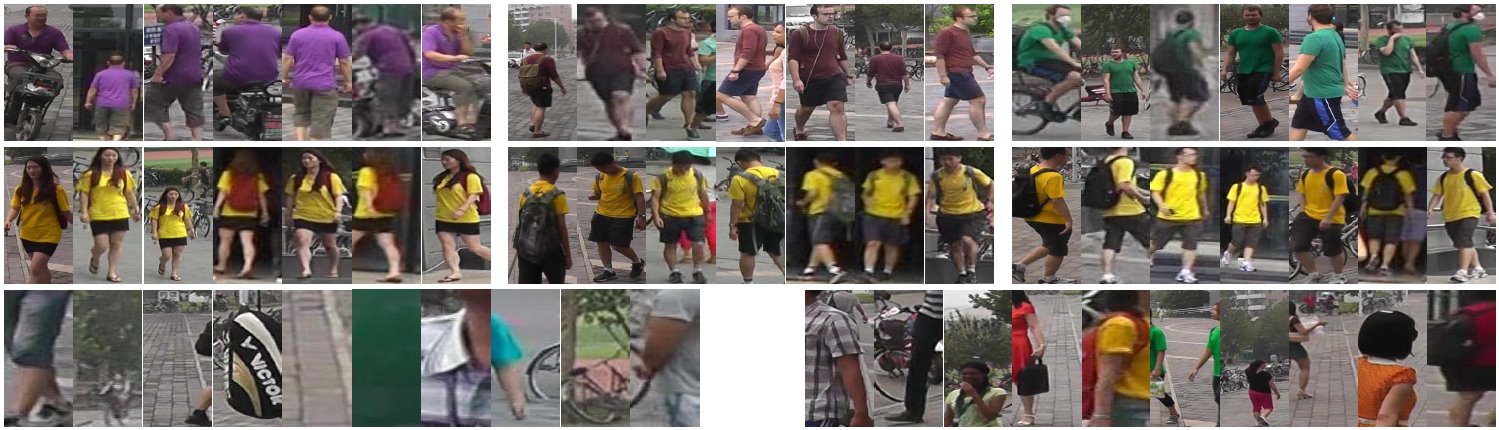

Person re-identification (re-ID), also known as person retrieval, is the task of matching pedestrian images observed from non-overlapping camera views based on their appearance. It has received increasing attention in video surveillance because of its important applications in threat detection, human retrieval, and multi-camera tracking, and it saves a great deal of human labor otherwise spent exhaustively searching large amounts of video for a person of interest.

Last Updated: Apr 26, 2019

Leaderboard

| Method | backbone | test size | Market1501 | CUHK03 (detected) | CUHK03 (detected/new) | CUHK03 (labeled/new) | CUHK-SYSU | DukeMTMC-reID | MARS |
|---|---|---|---|---|---|---|---|---|---|
| | | | rank1 / mAP | rank1 / 5 / 10 | rank1 / mAP | rank1 / mAP | rank1 / mAP | rank1 / mAP | rank1 / mAP |
| AlignedReID | ResNet50-X | | 92.6 / 82.3 | 91.9 / 98.7 / 99.4 | | 86.8 / 79.1 | | | 95.3 / 93.7 |
| AlignedReID (RK) | | | 94.0 / 91.2 | 96.1 / 99.5 / 99.6 | | 87.5 / 85.6 | | | |
| Deep-Person(SQ) | ResNet-50 | 256×128 | 92.31 / 79.58 | 89.4 / 98.2 / 99.1 | | | | | 80.90 / 64.80 |
| Deep-Person(MQ) | ResNet-50 | 256×128 | 94.48 / 85.09 | | | | | | |
| PCB(SQ) | ResNet-50 | 384×128 | 92.4 / 77.3 | | 61.3 / 54.2 | | | 81.9 / 65.3 | |
| PCB+RPP(SQ) | ResNet-50 | 384×128 | 93.8 / 81.6 | | 63.7 / 57.5 | | | 83.3 / 69.2 | |
| PN-GAN (SQ) | ResNet-50 | | 89.43 / 72.58 | 79.76 / 96.24 / 98.56 | | | | | 73.58 / 53.20 |
| PN-GAN (MQ) | ResNet-50 | | 95.90 / 91.37 | | | | | | |
| MGN (SQ) | ResNet-50 | | 95.7 / 86.9 | | 66.8 / 66.0 | 68.0 / 67.4 | | 88.7 / 78.4 | |
| MGN (MQ) | ResNet-50 | | 96.9 / 90.7 | | | | | | |
| MGN (SQ+RK) | ResNet-50 | | 96.6 / 94.2 | | | | | | |
| MGN (MQ+RK) | ResNet-50 | | 97.1 / 95.9 | | | | | | |
| HPM(SQ) | ResNet-50 | 384×128 | 94.2 / 82.7 | | 63.1 / 57.5 | | | 86.6 / 74.3 | |
| HPM+HRE(SQ) | ResNet-50 | 384×128 | 93.9 / 83.1 | | 63.2 / 59.7 | | | 86.3 / 74.5 | - |
| SphereReID | ResNet-50 | 288×144 | 94.4 / 83.6 | 93.1 / 98.7 / 99.4 | 63.2 / 59.7 | | 95.4 / 93.9 | 83.9 / 68.5 | - |
| Auto-ReID | | 384×128 | 94.5 / 85.1 | | 73.3 / 69.3 | 77.9 / 73.0 | | 88.5 / 75.1 | - |
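
The rank-1 and mAP figures above are standard retrieval metrics. The sketch below, in pure Python, shows one way to compute them from a query-to-gallery distance list. The function names are hypothetical. The sketch also assumes that dataset-specific filtering has already been applied; for Market-1501, that means removing junk images and same-camera images of the query identity.

```python
from typing import List, Sequence, Tuple


def rank_gallery(distances: Sequence[float]) -> List[int]:
    """Gallery indices ordered from most to least similar (smallest distance first)."""
    return sorted(range(len(distances)), key=lambda i: distances[i])


def cmc_and_ap(distances: Sequence[float], gallery_ids: Sequence[int],
               query_id: int, max_rank: int = 10) -> Tuple[List[int], float]:
    """Per-query CMC hits for ranks 1..max_rank and average precision.

    Assumes junk / same-camera gallery entries were already removed.
    """
    order = rank_gallery(distances)
    matches = [gallery_ids[i] == query_id for i in order]
    num_relevant = sum(matches)
    if num_relevant == 0:
        raise ValueError("query has no true match in the gallery")

    # CMC: a hit at rank k if any true match appears within the top k.
    first_hit = matches.index(True)
    cmc = [1 if k >= first_hit else 0 for k in range(max_rank)]

    # AP: mean of precision@rank taken at every true-match position.
    hits, precision_sum = 0, 0.0
    for rank, is_match in enumerate(matches, start=1):
        if is_match:
            hits += 1
            precision_sum += hits / rank
    return cmc, precision_sum / num_relevant


def rank1_and_map(per_query: List[Tuple[List[int], float]]) -> Tuple[float, float]:
    """Average per-query (cmc, ap) pairs into (rank-1 accuracy, mAP)."""
    rank1 = sum(cmc[0] for cmc, _ in per_query) / len(per_query)
    mean_ap = sum(ap for _, ap in per_query) / len(per_query)
    return rank1, mean_ap
```

In the table, SQ and MQ denote the single-query and multi-query settings, and RK denotes results after re-ranking. This sketch covers only the single-query case without re-ranking.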

Person Re-identification / Person Retrieval

DeepReID: Deep Filter Pairing Neural Network for Person Re-Identification

intro: CVPR 2014

paper: http://www.cv-foundation.org/openaccess/content_cvpr_2014/papers/Li_DeepReID_Deep_Filter_2014_CVPR_paper.pdf

An Improved Deep Learning Architecture for Person Re-Identification

intro: CVPR 2015

paper: http://www.cv-foundation.org/openaccess/content_cvpr_2015/papers/Ahmed_An_Improved_Deep_2015_CVPR_paper.pdf

github: https://github.com/Ning-Ding/Implementation-CVPR2015-CNN-for-ReID

Deep Ranking for Person Re-identification via Joint Representation Learning

intro: IEEE Transactions on Image Processing (TIP), 2016

arxiv: https://arxiv.org/abs/1505.06821

PersonNet: Person Re-identification with Deep Convolutional Neural Networks

arxiv: http://arxiv.org/abs/1601.07255

Learning Deep Feature Representations with Domain Guided Dropout for Person Re-identification

intro: CVPR 2016

arxiv: https://arxiv.org/abs/1604.07528

github: https://github.com/Cysu/dgd_person_reid

Person Re-Identification by Multi-Channel Parts-Based CNN with Improved Triplet Loss Function

intro: CVPR 2016

paper: http://www.cv-foundation.org/openaccess/content_cvpr_2016/papers/Cheng_Person_Re-Identification_by_CVPR_2016_paper.pdf

Joint Learning of Single-image and Cross-image Representations for Person Re-identification

intro: CVPR 2016

paper: http://openaccess.thecvf.com/content_cvpr_2016/papers/Wang_Joint_Learning_of_CVPR_2016_paper.pdf

End-to-End Comparative Attention Networks for Person Re-identification

paper: https://arxiv.org/abs/1606.04404

A Multi-task Deep Network for Person Re-identification

intro: AAAI 2017

arxiv: http://arxiv.org/abs/1607.05369

A Siamese Long Short-Term Memory Architecture for Human Re-Identification

arxiv: http://arxiv.org/abs/1607.08381

Gated Siamese Convolutional Neural Network Architecture for Human Re-Identification

intro: ECCV 2016

keywords: Market1501 rank1 = 65.9%

arxiv: https://arxiv.org/abs/1607.08378

Deep Neural Networks with Inexact Matching for Person Re-Identification

intro: NIPS 2016

keywords: Normalized correlation layer, CUHK03/CUHK01/QMULGRID

paper: https://papers.nips.cc/paper/6367-deep-neural-networks-with-inexact-matching-for-person-re-identification

github: https://github.com/InnovArul/personreid_normxcorr

Person Re-identification: Past, Present and Future

paper: https://arxiv.org/abs/1610.02984

note: https://blog.csdn.net/zdh2010xyz/article/details/53741682

Deep Learning Prototype Domains for Person Re-Identification

arxiv: https://arxiv.org/abs/1610.05047

Deep Transfer Learning for Person Re-identification

arxiv: https://arxiv.org/abs/1611.05244

note: https://blog.csdn.net/shenxiaolu1984/article/details/53607268

A Discriminatively Learned CNN Embedding for Person Re-identification

intro: TOMM 2017

arxiv: https://arxiv.org/abs/1611.05666

github(official, MatConvnet): https://github.com/layumi/2016_person_re-ID

github: https://github.com/D-X-Y/caffe-reid

Person Re-Identification via Recurrent Feature Aggregation

intro: ECCV 2016

keywords: recurrent feature aggregation network (RFA-Net)

arxiv: https://arxiv.org/abs/1701.06351

code: https://sites.google.com/site/yanyichao91sjtu/

github(official): https://github.com/daodaofr/caffe-re-id

Structured Deep Hashing with Convolutional Neural Networks for Fast Person Re-identification

arxiv: https://arxiv.org/abs/1702.04179

SVDNet for Pedestrian Retrieval

intro: ICCV 2017 spotlight

intro: On the Market-1501 dataset, rank-1 accuracy is improved from 55.2% to 80.5% for CaffeNet, and from 73.8% to 83.1% for ResNet-50

arxiv: https://arxiv.org/abs/1703.05693

github: https://github.com/syfafterzy/SVDNet-for-Pedestrian-Retrieval

In Defense of the Triplet Loss for Person Re-Identification

arxiv: https://arxiv.org/abs/1703.07737

github(Theano): https://github.com/VisualComputingInstitute/triplet-reid
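
The batch-hard variant proposed in this paper mines two samples for every anchor in a P×K batch: its farthest positive and its closest negative. The sketch below rests on several assumptions. It uses plain Euclidean distance and the hinge form rather than the soft-margin form. The margin of 0.3 is a placeholder, and the names are illustrative.

```python
import math
from typing import List, Sequence


def batch_hard_triplet_loss(embeddings: List[Sequence[float]],
                            labels: List[int], margin: float = 0.3) -> float:
    """Mean over anchors of [margin + hardest positive dist - hardest negative dist]_+."""
    n = len(embeddings)
    losses = []
    for a in range(n):
        pos = [math.dist(embeddings[a], embeddings[p])
               for p in range(n) if p != a and labels[p] == labels[a]]
        neg = [math.dist(embeddings[a], embeddings[q])
               for q in range(n) if labels[q] != labels[a]]
        if not pos or not neg:
            continue  # this anchor forms no valid triplet in the batch
        # Farthest positive and closest negative are the "hard" pair for this anchor.
        losses.append(max(0.0, margin + max(pos) - min(neg)))
    return sum(losses) / len(losses) if losses else 0.0
```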

Beyond triplet loss: a deep quadruplet network for person re-identification

intro: CVPR 2017

arxiv: https://arxiv.org/abs/1704.01719

paper: http://cvip.computing.dundee.ac.uk/papers/Chen_CVPR_2017_paper.pdf
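
The quadruplet loss adds a second hinge term over two negatives of different identities. This term also pushes inter-class distances that do not involve the anchor above the intra-class ones. The sketch below covers a single quadruplet and should be read as hedged. Squared Euclidean distance stands in for the learned metric used in the paper, and both margins are placeholder values.

```python
from typing import Sequence


def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance (a stand-in for the paper's learned metric)."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def quadruplet_loss(anchor, positive, neg1, neg2,
                    margin1: float = 1.0, margin2: float = 0.5) -> float:
    """anchor/positive share an identity; neg1 and neg2 are two other, mutually different identities."""
    d_ap = sq_dist(anchor, positive)
    # Usual triplet term: anchor-positive closer than anchor-negative.
    term1 = max(0.0, d_ap - sq_dist(anchor, neg1) + margin1)
    # Extra term: anchor-positive also closer than a negative pair not involving the anchor.
    term2 = max(0.0, d_ap - sq_dist(neg1, neg2) + margin2)
    return term1 + term2
```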

Quality Aware Network for Set to Set Recognition

intro: CVPR 2017

arxiv: https://arxiv.org/abs/1704.03373

github: https://github.com/sciencefans/Quality-Aware-Network

Learning Deep Context-aware Features over Body and Latent Parts for Person Re-identification

intro: CVPR 2017. CASIA

keywords: Multi-Scale Context-Aware Network (MSCAN)

arxiv: https://arxiv.org/abs/1710.06555

supplemental: Li_Learning_Deep_Context-Aware_2017_CVPR_supplemental.pdf

Point to Set Similarity Based Deep Feature Learning for Person Re-identification

intro: CVPR 2017

paper: http://openaccess.thecvf.com/content_cvpr_2017/papers/Zhou_Point_to_Set_CVPR_2017_paper.pdf

github(stay tuned): https://github.com/samaonline/Point-to-Set-Similarity-Based-Deep-Feature-Learning-for-Person-Re-identification

Scalable Person Re-identification on Supervised Smoothed Manifold

intro: CVPR 2017 spotlight

arxiv: https://arxiv.org/abs/1703.08359

youtube: https://www.youtube.com/watch?v=bESdJgalQrg

Attention-based Natural Language Person Retrieval

intro: CVPR 2017 Workshop (vision meets cognition)

keywords: Bidirectional Long Short Term Memory (BLSTM)

arxiv: https://arxiv.org/abs/1705.08923

Part-based Deep Hashing for Large-scale Person Re-identification

intro: IEEE Transactions on Image Processing, 2017

arxiv: https://arxiv.org/abs/1705.02145

Deep Person Re-Identification with Improved Embedding and Efficient Training

intro: IJCB 2017

arxiv: https://arxiv.org/abs/1705.03332

Towards a Principled Integration of Multi-Camera Re-Identification and Tracking through Optimal Bayes Filters

arxiv: https://arxiv.org/abs/1705.04608

github: https://github.com/VisualComputingInstitute/towards-reid-tracking

Person Re-Identification by Deep Joint Learning of Multi-Loss Classification

intro: IJCAI 2017

arxiv: https://arxiv.org/abs/1705.04724

Deep Representation Learning with Part Loss for Person Re-Identification

keywords: Part Loss Networks

arxiv: https://arxiv.org/abs/1707.00798

Pedestrian Alignment Network for Large-scale Person Re-identification

arxiv: https://arxiv.org/abs/1707.00408

github: https://github.com/layumi/Pedestrian_Alignment

Learning Efficient Image Representation for Person Re-Identification

arxiv: https://arxiv.org/abs/1707.02319

Person Re-identification Using Visual Attention

intro: ICIP 2017

arxiv: https://arxiv.org/abs/1707.07336

What-and-Where to Match: Deep Spatially Multiplicative Integration Networks for Person Re-identification

arxiv: https://arxiv.org/abs/1707.07074

Deep Feature Learning via Structured Graph Laplacian Embedding for Person Re-Identification

arxiv: https://arxiv.org/abs/1707.07791

Large Margin Learning in Set to Set Similarity Comparison for Person Re-identification

intro: IEEE Transactions on Multimedia

arxiv: https://arxiv.org/abs/1708.05512

Multi-scale Deep Learning Architectures for Person Re-identification

intro: ICCV 2017

arxiv: https://arxiv.org/abs/1709.05165

Person Re-Identification by Deep Learning Multi-Scale Representations

intro: ICCV 2017

keywords: Deep Pyramid Feature Learning (DPFL)

paper: Chen_Person_Re-Identification_by_ICCV_2017_paper.pdf

paper: http://www.eecs.qmul.ac.uk/~sgg/papers/ChenEtAl_ICCV2017WK_CHI.pdf

HydraPlus-Net: Attentive Deep Features for Pedestrian Analysis

intro: ICCV 2017. CUHK & SenseTime

arxiv: https://arxiv.org/abs/1709.09930

github: https://github.com/xh-liu/HydraPlus-Net

Person Re-Identification with Vision and Language

arxiv: https://arxiv.org/abs/1710.01202

Margin Sample Mining Loss: A Deep Learning Based Method for Person Re-identification

arxiv: https://arxiv.org/abs/1710.00478
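
As we read it, MSML does not mine per anchor. It takes only the single hardest positive pair and the single hardest negative pair from the whole batch. The sketch below follows that reading, using Euclidean distance and a placeholder margin. The function name is hypothetical.

```python
import math
from itertools import combinations
from typing import List, Sequence


def margin_sample_mining_loss(embeddings: List[Sequence[float]],
                              labels: List[int], margin: float = 0.1) -> float:
    """[max positive-pair distance - min negative-pair distance + margin]_+ over the whole batch."""
    pos_dists, neg_dists = [], []
    for i, j in combinations(range(len(embeddings)), 2):
        d = math.dist(embeddings[i], embeddings[j])
        (pos_dists if labels[i] == labels[j] else neg_dists).append(d)
    if not pos_dists or not neg_dists:
        return 0.0  # batch lacks either a positive or a negative pair
    return max(0.0, max(pos_dists) - min(neg_dists) + margin)
```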

Pseudo-positive regularization for deep person re-identification

arxiv: https://arxiv.org/abs/1711.06500

Let Features Decide for Themselves: Feature Mask Network for Person Re-identification

keywords: Feature Mask Network (FMN)

arxiv: https://arxiv.org/abs/1711.07155

AlignedReID: Surpassing Human-Level Performance in Person Re-Identification

intro: Megvii Inc & Zhejiang University

arxiv: https://arxiv.org/abs/1711.08184

evaluation website (Market1501): http://reid-challenge.megvii.com/

evaluation website (CUHK03): http://reid-challenge.megvii.com/cuhk03

github: https://github.com/huanghoujing/AlignedReID-Re-Production-Pytorch
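
AlignedReID aligns local features from horizontal stripes by finding a shortest path through the stripe-to-stripe distance matrix. Each element distance is first squashed into [0, 1). The sketch below computes that local distance and assumes each image is already represented as a list of per-stripe vectors. The function names are hypothetical.

```python
import math
from typing import List, Sequence


def normalized_distance(f: Sequence[float], g: Sequence[float]) -> float:
    """(e^d - 1) / (e^d + 1) of the Euclidean distance d; maps [0, inf) into [0, 1)."""
    d = math.dist(f, g)
    return (math.exp(d) - 1.0) / (math.exp(d) + 1.0)


def aligned_local_distance(parts_a: List[Sequence[float]],
                           parts_b: List[Sequence[float]]) -> float:
    """Total distance of the shortest path from (0, 0) to (H-1, H-1), moving only down or right."""
    n, m = len(parts_a), len(parts_b)
    dist = [[normalized_distance(parts_a[i], parts_b[j]) for j in range(m)] for i in range(n)]
    total = [[0.0] * m for _ in range(n)]
    for i in range(n):
        for j in range(m):
            if i == 0 and j == 0:
                best_prev = 0.0
            elif i == 0:
                best_prev = total[0][j - 1]
            elif j == 0:
                best_prev = total[i - 1][0]
            else:
                best_prev = min(total[i - 1][j], total[i][j - 1])
            total[i][j] = best_prev + dist[i][j]
    return total[n - 1][m - 1]
```

The squashing function equals tanh(d/2). It keeps a few badly mismatched stripes from dominating the path total.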

Region-based Quality Estimation Network for Large-scale Person Re-identification

intro: AAAI 2018

arxiv: https://arxiv.org/abs/1711.08766

Beyond Part Models: Person Retrieval with Refined Part Pooling

keywords: Part-based Convolutional Baseline (PCB), Refined Part Pooling (RPP)

arxiv: https://arxiv.org/abs/1711.09349
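
PCB's uniform partition splits the final convolutional feature map into horizontal stripes. Each stripe is then average-pooled into its own part descriptor. The sketch below is pure Python. It assumes a `[channels][height][width]` nested-list feature map whose height divides evenly into the parts. The default of six parts matches the paper's setting as we understand it.

```python
from typing import List

FeatureMap = List[List[List[float]]]  # [channels][height][width]


def part_pooling(feature_map: FeatureMap, num_parts: int = 6) -> List[List[float]]:
    """Average-pool each of num_parts equal horizontal stripes into a per-part channel vector."""
    channels, height, width = len(feature_map), len(feature_map[0]), len(feature_map[0][0])
    if height % num_parts:
        raise ValueError("this sketch requires height divisible by num_parts")
    stripe = height // num_parts
    parts = []
    for p in range(num_parts):
        rows = range(p * stripe, (p + 1) * stripe)
        parts.append([
            sum(feature_map[c][r][w] for r in rows for w in range(width)) / (stripe * width)
            for c in range(channels)
        ])
    return parts
```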

Deep-Person: Learning Discriminative Deep Features for Person Re-Identification

arxiv: https://arxiv.org/abs/1711.10658

Hierarchical Cross Network for Person Re-identification

arxiv: https://arxiv.org/abs/1712.06820

Re-ID done right: towards good practices for person re-identification

arxiv: https://arxiv.org/abs/1801.05339

Triplet-based Deep Similarity Learning for Person Re-Identification

intro: ICCV Workshops 2017

arxiv: https://arxiv.org/abs/1802.03254

Group Consistent Similarity Learning via Deep CRFs for Person Re-Identification

intro: CVPR 2018 oral

paper: http://openaccess.thecvf.com/content_cvpr_2018/papers/Chen_Group_Consistent_Similarity_CVPR_2018_paper.pdf

Image-Image Domain Adaptation with Preserved Self-Similarity and Domain-Dissimilarity for Person Re-identification

intro: CVPR 2018

keywords: similarity preserving generative adversarial network (SPGAN), Siamese network, CycleGAN, domain adaptation

arxiv: https://arxiv.org/abs/1711.07027

Harmonious Attention Network for Person Re-Identification

intro: CVPR 2018

keywords: Harmonious Attention CNN (HA-CNN)

arxiv: https://arxiv.org/abs/1802.08122

Camera Style Adaptation for Person Re-identification

intro: CVPR 2018

arxiv: https://arxiv.org/abs/1711.10295

github: https://github.com/zhunzhong07/CamStyle

Dual Attention Matching Network for Context-Aware Feature Sequence based Person Re-Identification

intro: CVPR 2018

arxiv: https://arxiv.org/abs/1803.09937

Multi-Level Factorisation Net for Person Re-Identification

intro: CVPR 2018

keywords: Multi-Level Factorisation Net (MLFN)

arxiv: https://arxiv.org/abs/1803.09132

Features for Multi-Target Multi-Camera Tracking and Re-Identification

intro: CVPR 2018

arxiv: https://arxiv.org/abs/1803.10859

Good Appearance Features for Multi-Target Multi-Camera Tracking

intro: CVPR 2018 spotlight. Duke University

keywords: adaptive weighted triplet loss, hard-identity mining

project page: http://vision.cs.duke.edu/DukeMTMC/

arxiv: https://arxiv.org/abs/1803.10859

Mask-guided Contrastive Attention Model for Person Re-Identification

intro: CVPR 2018

paper: http://openaccess.thecvf.com/content_cvpr_2018/papers/Song_Mask-Guided_Contrastive_Attention_CVPR_2018_paper.pdf

Efficient and Deep Person Re-Identification using Multi-Level Similarity

intro: CVPR 2018

arxiv: https://arxiv.org/abs/1803.11353

Person Re-identification with Cascaded Pairwise Convolutions

intro: CVPR 2018

paper: http://openaccess.thecvf.com/content_cvpr_2018/papers/Wang_Person_Re-Identification_With_CVPR_2018_paper.pdf

Attention-Aware Compositional Network for Person Re-identification

intro: CVPR 2018

intro: Sensets Technology Limited & University of Sydney

keywords: Attention-Aware Compositional Network (AACN), Pose-guided Part Attention (PPA), Attention-aware Feature Composition (AFC)

arxiv: https://arxiv.org/abs/1805.03344

Deep Group-shuffling Random Walk for Person Re-identification

intro: CVPR 2018

paper: http://openaccess.thecvf.com/content_cvpr_2018/papers/Shen_Deep_Group-Shuffling_Random_CVPR_2018_paper.pdf

Adversarially Occluded Samples for Person Re-identification

intro: CVPR 2018

paper: http://openaccess.thecvf.com/content_cvpr_2018/papers/Huang_Adversarially_Occluded_Samples_CVPR_2018_paper.pdf

Easy Identification from Better Constraints: Multi-Shot Person Re-Identification from Reference Constraints

intro: CVPR 2018

paper: http://openaccess.thecvf.com/content_cvpr_2018/papers/Zhou_Easy_Identification_From_CVPR_2018_paper.pdf

Eliminating Background-bias for Robust Person Re-identification

intro: CVPR 2018

paper: http://openaccess.thecvf.com/content_cvpr_2018/papers/Tian_Eliminating_Background-Bias_for_CVPR_2018_paper.pdf

End-to-End Deep Kronecker-Product Matching for Person Re-identification

intro: CVPR 2018

paper: http://openaccess.thecvf.com/content_cvpr_2018/papers/Shen_End-to-End_Deep_Kronecker-Product_CVPR_2018_paper.pdf

Exploiting Transitivity for Learning Person Re-identification Models on a Budget

intro: CVPR 2018

paper: http://openaccess.thecvf.com/content_cvpr_2018/papers/Roy_Exploiting_Transitivity_for_CVPR_2018_paper.pdf

Resource Aware Person Re-identification across Multiple Resolutions

intro: CVPR 2018

paper: http://openaccess.thecvf.com/content_cvpr_2018/papers/Wang_Resource_Aware_Person_CVPR_2018_paper.pdf

Multi-Channel Pyramid Person Matching Network for Person Re-Identification

intro: 32nd AAAI Conference on Artificial Intelligence

keywords: Multi-Channel deep convolutional Pyramid Person Matching Network (MC-PPMN)

arxiv: https://arxiv.org/abs/1803.02558

Pyramid Person Matching Network for Person Re-identification

intro: 9th Asian Conference on Machine Learning (ACML 2017), JMLR Workshop and Conference Proceedings

arxiv: https://arxiv.org/abs/1803.02547

Virtual CNN Branching: Efficient Feature Ensemble for Person Re-Identification

arxiv: https://arxiv.org/abs/1803.05872

Adversarial Binary Coding for Efficient Person Re-identification

arxiv: https://arxiv.org/abs/1803.10914

Learning View-Specific Deep Networks for Person Re-Identification

intro: IEEE Transactions on Image Processing. Sun Yat-Sen University

keywords: cross-view Euclidean constraint (CV-EC), cross-view center loss (CV-CL)

arxiv: https://arxiv.org/abs/1803.11333

Learning Discriminative Features with Multiple Granularities for Person Re-Identification

intro: Shanghai Jiao Tong University & CloudWalk

keywords: Multiple Granularity Network (MGN)

arxiv: https://arxiv.org/abs/1804.01438

Recurrent Neural Networks for Person Re-identification Revisited

intro: Stanford University & Google AI

arxiv: https://arxiv.org/abs/1804.03281

MaskReID: A Mask Based Deep Ranking Neural Network for Person Re-identification

arxiv: https://arxiv.org/abs/1804.03864

Horizontal Pyramid Matching for Person Re-identification

intro: AAAI 2019

intro: UIUC & IBM Research & Cornell University & Stevens Institute of Technology & CloudWalk Technology

keywords: Horizontal Pyramid Matching (HPM), Horizontal Pyramid Pooling (HPP), horizontal random erasing (HRE)

arxiv: https://arxiv.org/abs/1804.05275

github: https://github.com/OasisYang/HPM
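
Horizontal Pyramid Pooling repeats stripe pooling at several scales. As we read the paper, it combines global average pooling and global max pooling in each bin. The sketch below assumes scales of (1, 2, 4, 8), which give 15 bins, and an unweighted sum of the two poolings. The function name is hypothetical.

```python
from typing import List, Sequence

FeatureMap = List[List[List[float]]]  # [channels][height][width]


def horizontal_pyramid_pooling(feature_map: FeatureMap,
                               scales: Sequence[int] = (1, 2, 4, 8)) -> List[List[float]]:
    """One channel vector per bin; each value is avg-pool + max-pool over that bin."""
    channels, height, width = len(feature_map), len(feature_map[0]), len(feature_map[0][0])
    features = []
    for scale in scales:
        if height % scale:
            raise ValueError("this sketch requires height divisible by every scale")
        stripe = height // scale
        for p in range(scale):
            rows = range(p * stripe, (p + 1) * stripe)
            vec = []
            for c in range(channels):
                vals = [feature_map[c][r][w] for r in rows for w in range(width)]
                vec.append(sum(vals) / len(vals) + max(vals))
            features.append(vec)
    return features
```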

Deep Co-attention based Comparators For Relative Representation Learning in Person Re-identification

arxiv: https://arxiv.org/abs/1804.11027

Feature Affinity based Pseudo Labeling for Semi-supervised Person Re-identification

arxiv: https://arxiv.org/abs/1805.06118

Resource Aware Person Re-identification across Multiple Resolutions

intro: CVPR 2018

arxiv: https://arxiv.org/abs/1805.08805

Semantically Selective Augmentation for Deep Compact Person Re-Identification

arxiv: https://arxiv.org/abs/1806.04074

SphereReID: Deep Hypersphere Manifold Embedding for Person Re-Identification

intro: achieves 94.4% rank-1 accuracy on Market-1501 and 83.9% rank-1 accuracy on DukeMTMC-reID

arxiv: https://arxiv.org/abs/1807.00537
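
SphereReID's loss L2-normalizes both the embedding and the classifier weights. The softmax logits therefore become scaled cosines, and features are constrained to a hypersphere. The sketch below illustrates that idea. The scale of 16 is a placeholder assumption, not the paper's setting.

```python
import math
from typing import List, Sequence


def l2_normalize(v: Sequence[float]) -> List[float]:
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def sphere_softmax_loss(feature: Sequence[float], class_weights: List[Sequence[float]],
                        label: int, scale: float = 16.0) -> float:
    """Cross-entropy over logits scale * cos(theta) between normalized feature and weights."""
    f = l2_normalize(feature)
    logits = [scale * sum(a * b for a, b in zip(f, l2_normalize(w))) for w in class_weights]
    m = max(logits)  # subtract the max for a numerically stable log-sum-exp
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]
```

Because of the normalization, rescaling a feature leaves its loss unchanged. Only the feature's direction matters.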

Multi-task Mid-level Feature Alignment Network for Unsupervised Cross-Dataset Person Re-Identification

intro: BMVC 2018. University of Warwick & Nanyang Technological University & Charles Sturt University

arxiv: https://arxiv.org/abs/1807.01440

Discriminative Feature Learning with Foreground Attention for Person Re-Identification

arxiv: https://arxiv.org/abs/1807.01455

Part-Aligned Bilinear Representations for Person Re-identification

intro: ECCV 2018

intro: Seoul National University & Microsoft Research & Max Planck Institute & University of Tubingen & JD.COM

arxiv: https://arxiv.org/abs/1804.07094

github: https://github.com/yuminsuh/part_bilinear_reid

Mancs: A Multi-task Attentional Network with Curriculum Sampling for Person Re-identification

intro: ECCV 2018. Huazhong University of Science and Technology & Horizon Robotics Inc.

Improving Deep Visual Representation for Person Re-identification by Global and Local Image-language Association

intro: ECCV 2018

arxiv: https://arxiv.org/abs/1808.01571

Deep Sequential Multi-camera Feature Fusion for Person Re-identification

arxiv: https://arxiv.org/abs/1807.07295

Improving Deep Models of Person Re-identification for Cross-Dataset Usage

intro: AIAI 2018 (14th International Conference on Artificial Intelligence Applications and Innovations) proceedings

arxiv: https://arxiv.org/abs/1807.08526

Measuring the Temporal Behavior of Real-World Person Re-Identification

arxiv: https://arxiv.org/abs/1808.05499

AlignedReID++: Dynamically Matching Local Information for Person Re-Identification

github: https://github.com/michuanhaohao/AlignedReID

Sparse Label Smoothing for Semi-supervised Person Re-Identification

arxiv: https://arxiv.org/abs/1809.04976

github: https://github.com/jpainam/SLS_ReID

In Defense of the Classification Loss for Person Re-Identification

intro: University of Science and Technology of China & Microsoft Research Asia

arxiv: https://arxiv.org/abs/1809.05864

FD-GAN: Pose-guided Feature Distilling GAN for Robust Person Re-identification

intro: NIPS 2018

arxiv: https://arxiv.org/abs/1810.02936

github(Pytorch, official): https://github.com/yxgeee/FD-GAN

Image-to-Video Person Re-Identification by Reusing Cross-modal Embeddings

arxiv: https://arxiv.org/abs/1810.03989

Attention Driven Person Re-identification

intro: Pattern Recognition (PR)

arxiv: https://arxiv.org/abs/1810.05866

A Coarse-to-fine Pyramidal Model for Person Re-identification via Multi-Loss Dynamic Training

intro: YouTu Lab, Tencent

arxiv: https://arxiv.org/abs/1810.12193

M2M-GAN: Many-to-Many Generative Adversarial Transfer Learning for Person Re-Identification

arxiv: https://arxiv.org/abs/1811.03768

Batch Feature Erasing for Person Re-identification and Beyond

arxiv: https://arxiv.org/abs/1811.07130

github(official, Pytorch): https://github.com/daizuozhuo/batch-feature-erasing-network
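
Batch Feature Erasing zeroes the same randomly chosen rectangle in every feature map of a batch, rather than drawing a new region per sample. The sketch below works on nested lists and should be read as hedged. The 0.3 height ratio and full-width erasure are assumed defaults, and the names are hypothetical.

```python
import random
from typing import List, Optional, Tuple

FeatureMap = List[List[List[float]]]  # [channels][height][width]


def batch_feature_erase(batch: List[FeatureMap], height_ratio: float = 0.3,
                        width_ratio: float = 1.0, rng: Optional[random.Random] = None
                        ) -> Tuple[List[FeatureMap], Tuple[int, int, int, int]]:
    """Return an erased copy of the batch plus (top, left, erase_h, erase_w)."""
    rng = rng or random.Random()
    height, width = len(batch[0][0]), len(batch[0][0][0])
    erase_h = min(height, max(1, round(height * height_ratio)))
    erase_w = min(width, max(1, round(width * width_ratio)))
    # A single rectangle is drawn for the whole batch, not one per sample.
    top = rng.randint(0, height - erase_h)
    left = rng.randint(0, width - erase_w)

    def inside(r: int, c: int) -> bool:
        return top <= r < top + erase_h and left <= c < left + erase_w

    erased = [[[[0.0 if inside(r, c) else v for c, v in enumerate(row)]
                for r, row in enumerate(channel)]
               for channel in fmap]
              for fmap in batch]
    return erased, (top, left, erase_h, erase_w)
```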

Re-Identification with Consistent Attentive Siamese Networks

arxiv: https://arxiv.org/abs/1811.07487

One Shot Domain Adaptation for Person Re-Identification

arxiv: https://arxiv.org/abs/1811.10144

Parameter-Free Spatial Attention Network for Person Re-Identification

arxiv: https://arxiv.org/abs/1811.12150

Spectral Feature Transformation for Person Re-identification

intro: University of Chinese Academy of Sciences & TuSimple

arxiv: https://arxiv.org/abs/1811.11405

Identity Preserving Generative Adversarial Network for Cross-Domain Person Re-identification

arxiv: https://arxiv.org/abs/1811.11510

Dissecting Person Re-identification from the Viewpoint of Viewpoint

arxiv: https://arxiv.org/abs/1812.02162

Fast and Accurate Person Re-Identification with RMNet

intro: IOTG Computer Vision (ICV), Intel

arxiv: https://arxiv.org/abs/1812.02465

Spatial-Temporal Person Re-identification

intro: AAAI 2019

intro: Sun Yat-sen University

arxiv: https://arxiv.org/abs/1812.03282

github: https://github.com/Wanggcong/Spatial-Temporal-Re-identification
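
st-ReID combines a visual similarity score with a spatial-temporal probability, estimated from camera IDs and frame timestamps. Each term is passed through logistic smoothing before the two are combined. The sketch below covers only the fusion step, as we understand it. The `lam` and `gamma` values are placeholders, and the spatial-temporal probability is taken as given.

```python
import math
from typing import Tuple


def logistic_smoothing(x: float, lam: float = 1.0, gamma: float = 5.0) -> float:
    """f(x; lam, gamma) = 1 / (1 + lam * exp(-gamma * x))."""
    return 1.0 / (1.0 + lam * math.exp(-gamma * x))


def joint_score(visual_similarity: float, st_probability: float,
                lam: Tuple[float, float] = (1.0, 1.0),
                gamma: Tuple[float, float] = (5.0, 5.0)) -> float:
    """Product of the smoothed visual score and the smoothed spatial-temporal probability."""
    return (logistic_smoothing(visual_similarity, lam[0], gamma[0])
            * logistic_smoothing(st_probability, lam[1], gamma[1]))
```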

Omni-directional Feature Learning for Person Re-identification

intro: Tongji University

keywords: OIM loss

arxiv: https://arxiv.org/abs/1812.05319

Learning Incremental Triplet Margin for Person Re-identification

intro: AAAI 2019 spotlight

intro: Hikvision Research Institute

arxiv: https://arxiv.org/abs/1812.06576

Densely Semantically Aligned Person Re-Identification

intro: USTC & MSRA

arxiv: https://arxiv.org/abs/1812.08967

EANet: Enhancing Alignment for Cross-Domain Person Re-identification

intro: CRISE & CASIA & Horizon Robotics

arxiv: https://arxiv.org/abs/1812.11369

github(official, Pytorch): https://github.com/huanghoujing/EANet

blog: https://zhuanlan.zhihu.com/p/53660395

Backbone Can Not be Trained at Once: Rolling Back to Pre-trained Network for Person Re-Identification

intro: AAAI 2019

intro: Seoul National University & Samsung SDS

arxiv: https://arxiv.org/abs/1901.06140

Ensemble Feature for Person Re-Identification

keywords: EnsembleNet

arxiv: https://arxiv.org/abs/1901.05798

Adversarial Metric Attack for Person Re-identification

intro: University of Oxford & Johns Hopkins University

arxiv: https://arxiv.org/abs/1901.10650

Discovering Underlying Person Structure Pattern with Relative Local Distance for Person Re-identification

intro: SYSU

arxiv: https://arxiv.org/abs/1901.10100

github: https://github.com/Wanggcong/RLD_codes

Attributes-aided Part Detection and Refinement for Person Re-identification

arxiv: https://arxiv.org/abs/1902.10528

Bag of Tricks and A Strong Baseline for Deep Person Re-identification

arxiv: https://arxiv.org/abs/1903.07071

github: https://github.com/michuanhaohao/reid-strong-baseline
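
Label smoothing is one of the tricks evaluated in this paper. For N identities, it replaces the one-hot target with 1 - (N-1)ε/N for the true class and ε/N for every other class. The sketch below shows the resulting cross-entropy. The default ε = 0.1 is an assumption here.

```python
import math
from typing import Sequence


def label_smoothing_cross_entropy(logits: Sequence[float], label: int,
                                  epsilon: float = 0.1) -> float:
    """Cross-entropy between softmax(logits) and the smoothed one-hot target."""
    n = len(logits)
    m = max(logits)
    log_z = m + math.log(sum(math.exp(z - m) for z in logits))  # stable log-sum-exp
    log_probs = [z - log_z for z in logits]
    targets = [epsilon / n] * n
    targets[label] = 1.0 - (n - 1) * epsilon / n  # targets still sum to 1
    return -sum(t * lp for t, lp in zip(targets, log_probs))
```

With ε = 0 this reduces to ordinary cross-entropy. A positive ε penalizes over-confident logits on the training identities.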

Auto-ReID: Searching for a Part-aware ConvNet for Person Re-Identification

keywords: NAS

arxiv: https://arxiv.org/abs/1903.09776

Perceive Where to Focus: Learning Visibility-aware Part-level Features for Partial Person Re-identification

intro: CVPR 2019

intro: Tsinghua University & Megvii Technology

keywords: Visibility-aware Part Model (VPM)

arxiv: https://arxiv.org/abs/1904.00537

Pedestrian re-identification based on Tree branch network with local and global learning

intro: ICME 2019 oral

arxiv: https://arxiv.org/abs/1904.00355

Invariance Matters: Exemplar Memory for Domain Adaptive Person Re-identification

intro: CVPR 2019

arxiv: https://arxiv.org/abs/1904.01990

github: https://github.com/zhunzhong07/ECN

Person Re-identification with Bias-controlled Adversarial Training

arxiv: https://arxiv.org/abs/1904.00244

Person Re-identification with Metric Learning using Privileged Information

intro: IEEE TIP

arxiv: https://arxiv.org/abs/1904.05005

Joint Discriminative and Generative Learning for Person Re-identification

intro: CVPR 2019 oral

intro: NVIDIA & University of Technology Sydney & Australian National University

arxiv: https://arxiv.org/abs/1904.07223

Person Search

Joint Detection and Identification Feature Learning for Person Search

intro: CVPR 2017 Spotlight

keywords: Online Instance Matching (OIM) loss function

homepage(dataset+code): http://www.ee.cuhk.edu.hk/~xgwang/PS/dataset.html

arxiv: https://arxiv.org/abs/1604.01850

paper: http://www.ee.cuhk.edu.hk/~xgwang/PS/paper.pdf

github(official, Caffe): https://github.com/ShuangLI59/person_search
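
The OIM loss scores a normalized feature against two stores: a lookup table of labeled identity features and a circular queue of unlabeled ones. It then updates the matching table entry with a momentum average. The sketch below covers that bookkeeping only and does not compute gradients. The class name, queue size, temperature, and momentum values are all illustrative assumptions.

```python
import math
from collections import deque
from typing import List, Optional, Sequence


def l2_normalize(v: Sequence[float]) -> List[float]:
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm > 0 else list(v)


class OIMSketch:
    """Hypothetical helper: lookup table (LUT) of labeled IDs plus circular queue of unlabeled features."""

    def __init__(self, lut_init: List[Sequence[float]], queue_size: int = 5000,
                 temperature: float = 0.1, momentum: float = 0.5):
        self.lut = [l2_normalize(v) for v in lut_init]
        self.queue = deque(maxlen=queue_size)  # oldest unlabeled features drop out first
        self.temperature = temperature
        self.momentum = momentum

    def loss(self, feature: Sequence[float], label: int) -> float:
        """-log softmax probability of the true ID over LUT entries and queued features."""
        x = l2_normalize(feature)
        logits = [sum(a * b for a, b in zip(x, v)) / self.temperature
                  for v in list(self.lut) + list(self.queue)]
        m = max(logits)
        log_z = m + math.log(sum(math.exp(z - m) for z in logits))
        return log_z - logits[label]

    def update(self, feature: Sequence[float], label: Optional[int] = None) -> None:
        """Momentum-update the LUT row for a labeled feature, or enqueue an unlabeled one."""
        x = l2_normalize(feature)
        if label is None:
            self.queue.append(x)
        else:
            mixed = [self.momentum * v + (1 - self.momentum) * a
                     for v, a in zip(self.lut[label], x)]
            self.lut[label] = l2_normalize(mixed)
```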

Person Re-identification in the Wild

intro: CVPR 2017 spotlight

keywords: PRW dataset

project page: http://www.liangzheng.com.cn/Project/project_prw.html

arxiv: https://arxiv.org/abs/1604.02531

github: https://github.com/liangzheng06/PRW-baseline

youtube: https://www.youtube.com/watch?v=dbOGwBITJqo

IAN: The Individual Aggregation Network for Person Search

arxiv: https://arxiv.org/abs/1705.05552

Neural Person Search Machines

intro: ICCV 2017

arxiv: https://arxiv.org/abs/1707.06777

End-to-End Detection and Re-identification Integrated Net for Person Search

keywords: I-Net

arxiv: https://arxiv.org/abs/1804.00376

Person Search via A Mask-guided Two-stream CNN Model

intro: ECCV 2018

arxiv: https://arxiv.org/abs/1807.08107

Person Search by Multi-Scale Matching

intro: ECCV 2018

keywords: Cross-Level Semantic Alignment (CLSA)

arxiv: https://arxiv.org/abs/1807.08582

Learning Context Graph for Person Search

intro: CVPR 2019

intro: Shanghai Jiao Tong University & Tencent YouTu Lab & Inception Institute of Artificial Intelligence, UAE

arxiv: https://arxiv.org/abs/1904.01830

Pose/View for Re-ID

Pose Invariant Embedding for Deep Person Re-identification

keywords: pose invariant embedding (PIE), PoseBox fusion (PBF) CNN

arxiv: https://arxiv.org/abs/1701.07732

Deeply-Learned Part-Aligned Representations for Person Re-Identification

intro: ICCV 2017

arxiv: https://arxiv.org/abs/1707.07256

github(official, Caffe): https://github.com/zlmzju/part_reid

Spindle Net: Person Re-identification with Human Body Region Guided Feature Decomposition and Fusion

intro: CVPR 2017

paper: http://openaccess.thecvf.com/content_cvpr_2017/papers/Zhao_Spindle_Net_Person_CVPR_2017_paper.pdf

github: https://github.com/yokattame/SpindleNet

Pose-driven Deep Convolutional Model for Person Re-identification

intro: ICCV 2017

arxiv: https://arxiv.org/abs/1709.08325

A Pose-Sensitive Embedding for Person Re-Identification with Expanded Cross Neighborhood Re-Ranking

intro: CVPR 2018

arxiv: https://arxiv.org/abs/1711.10378

github(official): https://github.com/pse-ecn/pose-sensitive-embedding

Pose-Driven Deep Models for Person Re-Identification

intro: Master's thesis

arxiv: https://arxiv.org/abs/1803.08709

Pose Transferrable Person Re-Identification

intro: CVPR 2018

paper: http://openaccess.thecvf.com/content_cvpr_2018/papers/Liu_Pose_Transferrable_Person_CVPR_2018_paper.pdf

Person re-identification with fusion of hand-crafted and deep pose-based body region features

arxiv: https://arxiv.org/abs/1803.10630

GAN for Re-ID

Unlabeled Samples Generated by GAN Improve the Person Re-identification Baseline in vitro

intro: ICCV 2017

arxiv: https://arxiv.org/abs/1701.07717

github(official, Matlab): https://github.com/layumi/Person-reID_GAN

github: https://github.com/qiaoguan/Person-reid-GAN-pytorch

Person Transfer GAN to Bridge Domain Gap for Person Re-Identification

intro: CVPR 2018 spotlight

intro: PTGAN

arxiv: https://arxiv.org/abs/1711.08565

github: https://github.com/JoinWei-PKU/PTGAN

Pose-Normalized Image Generation for Person Re-identification

keywords: PN-GAN

arxiv: https://arxiv.org/abs/1712.02225

github: https://github.com/naiq/PN_GAN

Multi-pseudo Regularized Label for Generated Samples in Person Re-Identification

arxiv: https://arxiv.org/abs/1801.06742

Human Parsing for Re-ID

Human Semantic Parsing for Person Re-identification

intro: CVPR 2018. SPReID

arxiv: https://arxiv.org/abs/1804.00216

Improved Person Re-Identification Based on Saliency and Semantic Parsing with Deep Neural Network Models

keywords: Saliency-Semantic Parsing Re-Identification (SSP-ReID)

arxiv: https://arxiv.org/abs/1807.05618

Partial Person Re-ID

Partial Person Re-identification

intro: ICCV 2015

paper: https://www.cv-foundation.org/openaccess/content_iccv_2015/papers/Zheng_Partial_Person_Re-Identification_ICCV_2015_paper.pdf

Deep Spatial Feature Reconstruction for Partial Person Re-identification: Alignment-Free Approach

intro: CVPR 2018.

keywords: Market1501 rank1=83.58%

arxiv: https://arxiv.org/abs/1801.00881

Occluded Person Re-identification

intro: ICME 2018

arxiv: https://arxiv.org/abs/1804.02792

Partial Person Re-identification with Alignment and Hallucination

intro: Imperial College London

keywords: Partial Matching Net (PMN)

arxiv: https://arxiv.org/abs/1807.09162

SCPNet: Spatial-Channel Parallelism Network for Joint Holistic and Partial Person Re-Identification

intro: ACCV 2018

arxiv: https://arxiv.org/abs/1810.06996

STNReID: Deep Convolutional Networks with Pairwise Spatial Transformer Networks for Partial Person Re-identification

intro: Zhejiang University & Megvii Inc

arxiv: https://arxiv.org/abs/1903.07072

Foreground-aware Pyramid Reconstruction for Alignment-free Occluded Person Re-identification

arxiv: https://arxiv.org/abs/1904.04975

RGB-IR Re-ID

RGB-Infrared Cross-Modality Person Re-Identification

paper: Wu_RGB-Infrared_Cross-Modality_Person_ICCV_2017_paper.pdf

Depth-Based Re-ID

Reinforced Temporal Attention and Split-Rate Transfer for Depth-Based Person Re-Identification

intro: ECCV 2018

paper: Nikolaos_Karianakis_Reinforced_Temporal_Attention_ECCV_2018_paper.pdf

A Cross-Modal Distillation Network for Person Re-identification in RGB-Depth

arxiv: https://arxiv.org/abs/1810.11641

Low Resolution Re-ID

Multi-scale Learning for Low-resolution Person Re-identification

intro: ICCV 2015

paper: https://www.cv-foundation.org/openaccess/content_iccv_2015/papers/Li_Multi-Scale_Learning_for_ICCV_2015_paper.pdf

Cascaded SR-GAN for Scale-Adaptive Low Resolution Person Re-identification

intro: IJCAI 2018

paper: https://www.ijcai.org/proceedings/2018/0541.pdf

Deep Low-Resolution Person Re-Identification

intro: AAAI 2018

keywords: Super resolution and Identity joiNt learninG (SING)

paper: http://www.eecs.qmul.ac.uk/~xiatian/papers/JiaoEtAl_2018AAAI.pdf

Reinforcement Learning for Re-ID

Deep Reinforcement Learning Attention Selection for Person Re-Identification

Identity Alignment by Noisy Pixel Removal

intro: BMVC 2017

arxiv: https://arxiv.org/abs/1707.02785

paper: http://www.eecs.qmul.ac.uk/~sgg/papers/LanEtAl_2017BMVC.pdf

Attributes Prediction for Re-ID

Multi-Task Learning with Low Rank Attribute Embedding for Person Re-identification

intro: ICCV 2015

paper: http://legacydirs.umiacs.umd.edu/~fyang/papers/iccv15.pdf

Deep Attributes Driven Multi-Camera Person Re-identification

intro: ECCV 2016

arxiv: https://arxiv.org/abs/1605.03259

Improving Person Re-identification by Attribute and Identity Learning

arxiv: https://arxiv.org/abs/1703.07220

Person Re-identification by Deep Learning Attribute-Complementary Information

intro: CVPR 2017 workshop

paper: https://doi.org/10.1109/CVPRW.2017.186

CA3Net: Contextual-Attentional Attribute-Appearance Network for Person Re-Identification

arxiv: https://arxiv.org/abs/1811.07544

Video Person Re-Identification

Recurrent Convolutional Network for Video-based Person Re-Identification

intro: CVPR 2016

paper: McLaughlin_Recurrent_Convolutional_Network_CVPR_2016_paper.pdf

github: https://github.com/niallmcl/Recurrent-Convolutional-Video-ReID

Deep Recurrent Convolutional Networks for Video-based Person Re-identification: An End-to-End Approach

arxiv: https://arxiv.org/abs/1606.01609

Jointly Attentive Spatial-Temporal Pooling Networks for Video-based Person Re-Identification

intro: ICCV 2017

arxiv: https://arxiv.org/abs/1708.02286

Three-Stream Convolutional Networks for Video-based Person Re-Identification

arxiv: https://arxiv.org/abs/1712.01652

LVreID: Person Re-Identification with Long Sequence Videos

arxiv: https://arxiv.org/abs/1712.07286

Multi-shot Pedestrian Re-identification via Sequential Decision Making

intro: CVPR 2018. TuSimple

keywords: reinforcement learning

arxiv: https://arxiv.org/abs/1712.07257

github: https://github.com/TuSimple/rl-multishot-reid

Diversity Regularized Spatiotemporal Attention for Video-based Person Re-identification

intro: CUHK-SenseTime & Argo AI

arxiv: https://arxiv.org/abs/1803.09882

Video Person Re-identification with Competitive Snippet-similarity Aggregation and Co-attentive Snippet Embedding

intro: CVPR 2018 Poster

paper: http://openaccess.thecvf.com/content_cvpr_2018/papers/Chen_Video_Person_Re-Identification_CVPR_2018_paper.pdf

Exploit the Unknown Gradually: One-Shot Video-Based Person Re-Identification by Stepwise Learning

intro: CVPR 2018

paper: http://openaccess.thecvf.com/content_cvpr_2018/papers/Wu_Exploit_the_Unknown_CVPR_2018_paper.pdf

Revisiting Temporal Modeling for Video-based Person ReID

arxiv: https://arxiv.org/abs/1805.02104

github: https://github.com/jiyanggao/Video-Person-ReID

Video Person Re-identification by Temporal Residual Learning

arxiv: https://arxiv.org/abs/1802.07918

A Spatial and Temporal Features Mixture Model with Body Parts for Video-based Person Re-Identification

arxiv: https://arxiv.org/abs/1807.00975

Video-based Person Re-identification via 3D Convolutional Networks and Non-local Attention

intro: University of Science and Technology of China & University of Chinese Academy of Sciences

arxiv: https://arxiv.org/abs/1807.05073

Spatial-Temporal Synergic Residual Learning for Video Person Re-Identification

arxiv: https://arxiv.org/abs/1807.05799

Where-and-When to Look: Deep Siamese Attention Networks for Video-based Person Re-identification

intro: IEEE Transactions on Multimedia

arxiv: https://arxiv.org/abs/1808.01911

STA: Spatial-Temporal Attention for Large-Scale Video-based Person Re-Identification

intro: AAAI 2019

arxiv: https://arxiv.org/abs/1811.04129

Multi-scale 3D Convolution Network for Video Based Person Re-Identification

intro: AAAI 2019

arxiv: https://arxiv.org/abs/1811.07468

Deep Active Learning for Video-based Person Re-identification

arxiv: https://arxiv.org/abs/1812.05785

Spatial and Temporal Mutual Promotion for Video-based Person Re-identification

intro: AAAI 2019

arxiv: https://arxiv.org/abs/1812.10305

3D PersonVLAD: Learning Deep Global Representations for Video-based Person Re-identification

arxiv: https://arxiv.org/abs/1812.10222

SCAN: Self-and-Collaborative Attention Network for Video Person Re-identification

intro: TIP 2019

arxiv: https://arxiv.org/abs/1807.05688

GAN-based Pose-aware Regulation for Video-based Person Re-identification

intro: Heriot-Watt University & University of Edinburgh & Queen’s University Belfast & Anyvision

keywords: Weighted Fusion (WF) & Weighted-Pose Regulation (WPR)

arxiv: https://arxiv.org/abs/1903.11552

Convolutional Temporal Attention Model for Video-based Person Re-identification

intro: ICME 2019

arxiv: https://arxiv.org/abs/1904.04492

Re-ranking

Divide and Fuse: A Re-ranking Approach for Person Re-identification

intro: BMVC 2017

arxiv: https://arxiv.org/abs/1708.04169

Re-ranking Person Re-identification with k-reciprocal Encoding

intro: CVPR 2017

arxiv: https://arxiv.org/abs/1701.08398

github: https://github.com/zhunzhong07/person-re-ranking

A Pose-Sensitive Embedding for Person Re-Identification with Expanded Cross Neighborhood Re-Ranking

intro: CVPR 2018

arxiv: https://arxiv.org/abs/1711.10378

github(official): https://github.com/pse-ecn/expanded-cross-neighborhood

Adaptive Re-ranking of Deep Feature for Person Re-identification

arxiv: https://arxiv.org/abs/1811.08561
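The k-reciprocal encoding paper in this section starts from k-reciprocal nearest neighbours: sample j is kept for sample i only if j is among i's k nearest neighbours and i is among j's. Below is a minimal pure-Python sketch of that neighbour-set step alone, assuming a precomputed symmetric distance matrix. It is not the full re-ranking method (the paper further expands the set and blends a Jaccard distance with the original distance), and the helper names `k_nearest` and `k_reciprocal` are hypothetical.

```python
from typing import List, Sequence, Set


def k_nearest(dist: Sequence[Sequence[float]], i: int, k: int) -> List[int]:
    """N(i, k): indices of the k samples closest to sample i.

    Sample i itself is included (its distance is 0); ties are broken by index.
    """
    return sorted(range(len(dist)), key=lambda j: (dist[i][j], j))[:k]


def k_reciprocal(dist: Sequence[Sequence[float]], i: int, k: int) -> Set[int]:
    """R(i, k): members j of N(i, k) that also have i in N(j, k)."""
    return {j for j in k_nearest(dist, i, k) if i in k_nearest(dist, j, k)}


# Toy example: four 1-D "features"; distances are absolute differences.
positions = [0.0, 1.0, 1.5, 5.0]
dist = [[abs(a - b) for b in positions] for a in positions]

print(k_reciprocal(dist, 0, 2))  # → {0}: sample 1 is near 0, but 0 is not in N(1, 2)
print(k_reciprocal(dist, 0, 3))  # → {0, 1, 2}
```

The reciprocity check is what filters out "one-sided" neighbours such as sample 1 for k = 2, which is the property the re-ranking papers rely on to suppress false matches.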

Unsupervised Re-ID

Unsupervised Person Re-identification: Clustering and Fine-tuning

arxiv: https://arxiv.org/abs/1705.10444

github: https://github.com/hehefan/Unsupervised-Person-Re-identification-Clustering-and-Fine-tuning

Stepwise Metric Promotion for Unsupervised Video Person Re-identification

intro: ICCV 2017

paper: http://openaccess.thecvf.com/content_ICCV_2017/papers/Liu_Stepwise_Metric_Promotion_ICCV_2017_paper.pdf

github: https://github.com/lilithliu/StepwiseMetricPromotion-code

Dynamic Label Graph Matching for Unsupervised Video Re-Identification

intro: ICCV 2017

arxiv: https://arxiv.org/abs/1709.09297

github: https://github.com/mangye16/dgm_re-id

Unsupervised Cross-dataset Person Re-identification by Transfer Learning of Spatio-temporal Patterns

intro: CVPR 2018

arxiv: https://arxiv.org/abs/1803.07293

github: https://github.com/ahangchen/TFusion

blog: https://zhuanlan.zhihu.com/p/34778414

Cross-dataset Person Re-Identification Using Similarity Preserved Generative Adversarial Networks

arxiv: https://arxiv.org/abs/1806.04533

Transferable Joint Attribute-Identity Deep Learning for Unsupervised Person Re-Identification

intro: CVPR 2018

arxiv: https://arxiv.org/abs/1803.09786

Adaptation and Re-Identification Network: An Unsupervised Deep Transfer Learning Approach to Person Re-Identification

intro: CVPR 2018 workshop. National Taiwan University & Umbo Computer Vision

keywords: adaptation and re-identification network (ARN)

arxiv: https://arxiv.org/abs/1804.09347

Domain Adaptation through Synthesis for Unsupervised Person Re-identification

arxiv: https://arxiv.org/abs/1804.10094

Deep Association Learning for Unsupervised Video Person Re-identification

intro: BMVC 2018

arxiv: https://arxiv.org/abs/1808.07301

Support Neighbor Loss for Person Re-Identification

intro: ACM Multimedia (ACM MM) 2018

arxiv: https://arxiv.org/abs/1808.06030

Unsupervised Person Re-identification by Deep Learning Tracklet Association

intro: ECCV 2018 Oral

arxiv: https://arxiv.org/abs/1809.02874

Unsupervised Tracklet Person Re-Identification

intro: TPAMI 2019

arxiv: https://arxiv.org/abs/1903.00535

github: https://github.com/liminxian/DukeMTMC-SI-Tracklet

Unsupervised Person Re-identification by Deep Asymmetric Metric Embedding

intro: TPAMI

keywords: DEep Clustering-based Asymmetric MEtric Learning (DECAMEL)

arxiv: https://arxiv.org/abs/1901.10177

github: https://github.com/KovenYu/DECAMEL

Unsupervised Person Re-identification by Soft Multilabel Learning

intro: CVPR 2019 oral. Sun Yat-sen University & YouTu Lab & Queen Mary University of London

keywords: MAR (MultilAbel Reference learning), soft multilabel-guided hard negative mining

project page: https://kovenyu.com/publication/2019-cvpr-mar/

arxiv: https://arxiv.org/abs/1903.06325

github(official, PyTorch): https://github.com/KovenYu/MAR

A Novel Unsupervised Camera-aware Domain Adaptation Framework for Person Re-identification

arxiv: https://arxiv.org/abs/1904.03425

Weakly Supervised Person Re-identification

Weakly Supervised Person Re-Identification

intro: CVPR 2019

keywords: multi-instance multi-label learning (MIML), Cross-View MIML (CV-MIML)

arxiv: https://arxiv.org/abs/1904.03832

Weakly Supervised Person Re-identification: Cost-effective Learning with A New Benchmark

keywords: SYSU-30k

arxiv: https://arxiv.org/abs/1904.03845

Vehicle Re-ID

Learning Deep Neural Networks for Vehicle Re-ID with Visual-spatio-temporal Path Proposals

intro: ICCV 2017

arxiv: https://arxiv.org/abs/1708.03918

Viewpoint-Aware Attentive Multi-View Inference for Vehicle Re-Identification

intro: CVPR 2018

paper: http://openaccess.thecvf.com/content_cvpr_2018/papers/Zhou_Viewpoint-Aware_Attentive_Multi-View_CVPR_2018_paper.pdf

RAM: A Region-Aware Deep Model for Vehicle Re-Identification

intro: ICME 2018

arxiv: https://arxiv.org/abs/1806.09283

Vehicle Re-Identification in Context

intro: GCPR 2018 (40th German Conference on Pattern Recognition), Stuttgart

project page: https://qmul-vric.github.io/

arxiv: https://arxiv.org/abs/1809.09409

Vehicle Re-identification Using Quadruple Directional Deep Learning Features

arxiv: https://arxiv.org/abs/1811.05163

Coarse-to-fine: A RNN-based hierarchical attention model for vehicle re-identification

intro: ACCV 2018

arxiv: https://arxiv.org/abs/1812.04239

Vehicle Re-Identification: an Efficient Baseline Using Triplet Embedding

arxiv: https://arxiv.org/abs/1901.01015

A Two-Stream Siamese Neural Network for Vehicle Re-Identification by Using Non-Overlapping Cameras

intro: ICIP 2019

arxiv: https://arxiv.org/abs/1902.01496

CityFlow: A City-Scale Benchmark for Multi-Target Multi-Camera Vehicle Tracking and Re-Identification

intro: CVPR 2019 oral (review ratings: 2 strong accepts and 1 accept). Work done during an internship at NVIDIA

arxiv: https://arxiv.org/abs/1903.09254

Vehicle Re-identification in Aerial Imagery: Dataset and Approach

intro: Northwestern Polytechnical University

arxiv: https://arxiv.org/abs/1904.01400

Deep Metric Learning

Deep Metric Learning for Person Re-Identification

intro: ICPR 2014

paper: http://www.cbsr.ia.ac.cn/users/zlei/papers/ICPR2014/Yi-ICPR-14.pdf

Deep Metric Learning for Practical Person Re-Identification

arxiv: https://arxiv.org/abs/1407.4979

Constrained Deep Metric Learning for Person Re-identification

arxiv: https://arxiv.org/abs/1511.07545

Embedding Deep Metric for Person Re-identification: A Study Against Large Variations

intro: ECCV 2016

arxiv: https://arxiv.org/abs/1611.00137

DarkRank: Accelerating Deep Metric Learning via Cross Sample Similarities Transfer

intro: TuSimple

keywords: pedestrian re-identification

arxiv: https://arxiv.org/abs/1707.01220
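A common metric-learning objective in re-ID, and the one named by the caffe-PersonReID and ReID_baseline projects under Projects, is the triplet loss: an anchor should be closer to a same-identity sample than to a different-identity sample by at least a margin. Below is a minimal pure-Python sketch (Python 3.8+ for `math.dist`). The batch-hard mining variant, the default margin of 0.3 and the name `batch_hard_triplet_loss` are assumptions for illustration, not taken from any specific paper or project listed here.

```python
import math
from typing import List, Sequence


def batch_hard_triplet_loss(
    embeddings: Sequence[Sequence[float]],
    labels: Sequence[int],
    margin: float = 0.3,  # assumed value; tune per dataset
) -> float:
    """Batch-hard triplet loss over one mini-batch.

    For each anchor a: take its farthest same-identity sample (hardest positive)
    and its closest different-identity sample (hardest negative), then apply the
    hinge max(0, margin + d(a, p) - d(a, n)). The result is the mean over anchors
    that have at least one positive and one negative in the batch.
    """
    losses: List[float] = []
    for a, (emb_a, lab_a) in enumerate(zip(embeddings, labels)):
        pos = [math.dist(emb_a, e)
               for j, (e, lab) in enumerate(zip(embeddings, labels))
               if j != a and lab == lab_a]
        neg = [math.dist(emb_a, e)
               for e, lab in zip(embeddings, labels) if lab != lab_a]
        if pos and neg:
            losses.append(max(0.0, margin + max(pos) - min(neg)))
    return sum(losses) / len(losses) if losses else 0.0


# Two identities with two 2-D embeddings each.
emb = [[0.0, 0.0], [0.0, 1.0], [3.0, 0.0], [3.0, 1.0]]
print(batch_hard_triplet_loss(emb, [0, 0, 1, 1]))  # identities well separated → 0.0
print(batch_hard_triplet_loss(emb, [0, 1, 0, 1]))  # labels mismatched to geometry → about 2.3
```

Mining only the hardest positive and negative per anchor keeps the loss focused on the triplets that actually violate the margin; in practice this is usually paired with identity-balanced batch sampling so that every anchor has positives.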

Projects

Open-ReID: Open source person re-identification library in python

intro: Open-ReID is a lightweight person re-identification library for research purposes. It aims to provide a uniform interface for different datasets, a full set of models and evaluation metrics, as well as examples to reproduce (near) state-of-the-art results.

project page: https://cysu.github.io/open-reid/

github(PyTorch): https://github.com/Cysu/open-reid

examples: https://cysu.github.io/open-reid/examples/training_id.html

benchmarks: https://cysu.github.io/open-reid/examples/benchmarks.html

caffe-PersonReID

intro: Person Re-Identification: Multi-Task Deep CNN with Triplet Loss

github: https://github.com/agjayant/caffe-Person-ReID

Person_reID_baseline_pytorch

intro: PyTorch implementation of a person re-identification baseline

github: https://github.com/layumi/Person_reID_baseline_pytorch

deep-person-reid

intro: PyTorch implementation of deep person re-identification models.

github: https://github.com/KaiyangZhou/deep-person-reid

ReID_baseline

intro: Baseline model (with bottleneck) for person ReID (using softmax and triplet loss).

github: https://github.com/L1aoXingyu/reid_baseline

blog: https://zhuanlan.zhihu.com/p/40514536

gluon-reid

intro: A code gallery for person re-identification with MXNet Gluon, which aims to reproduce many state-of-the-art (SOTA) algorithms.

github: https://github.com/xiaolai-sqlai/gluon-reid

Evaluation

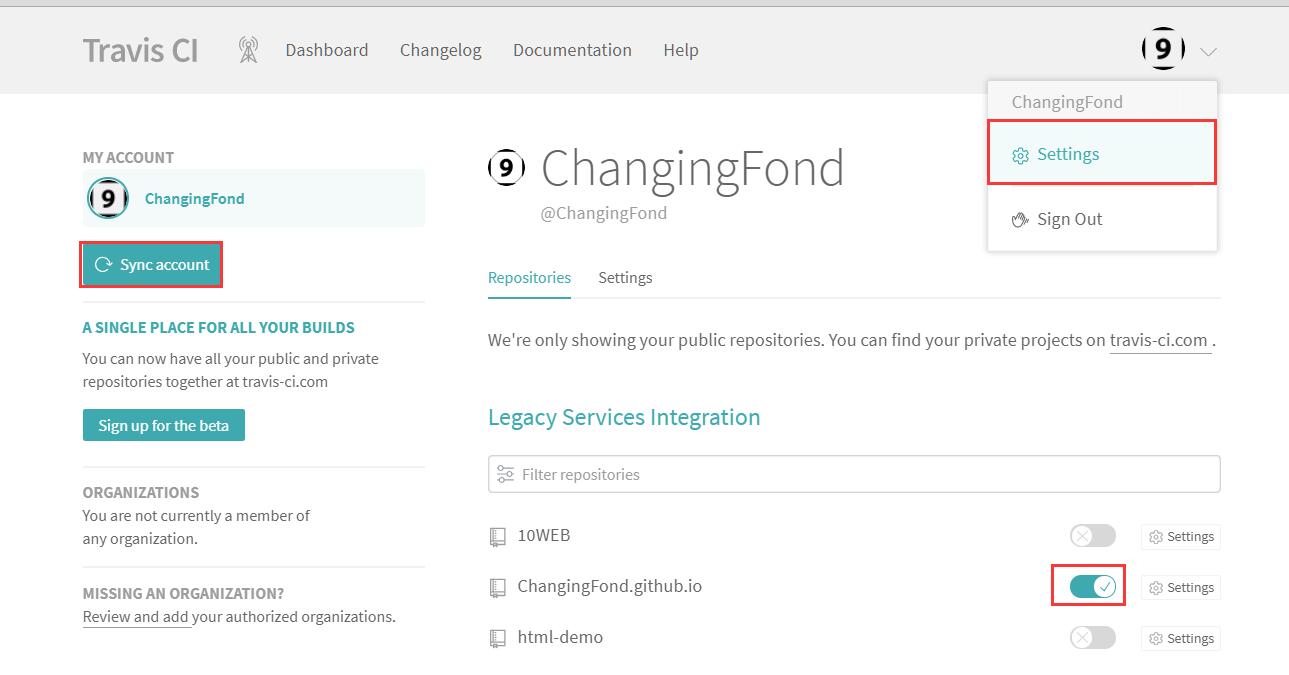

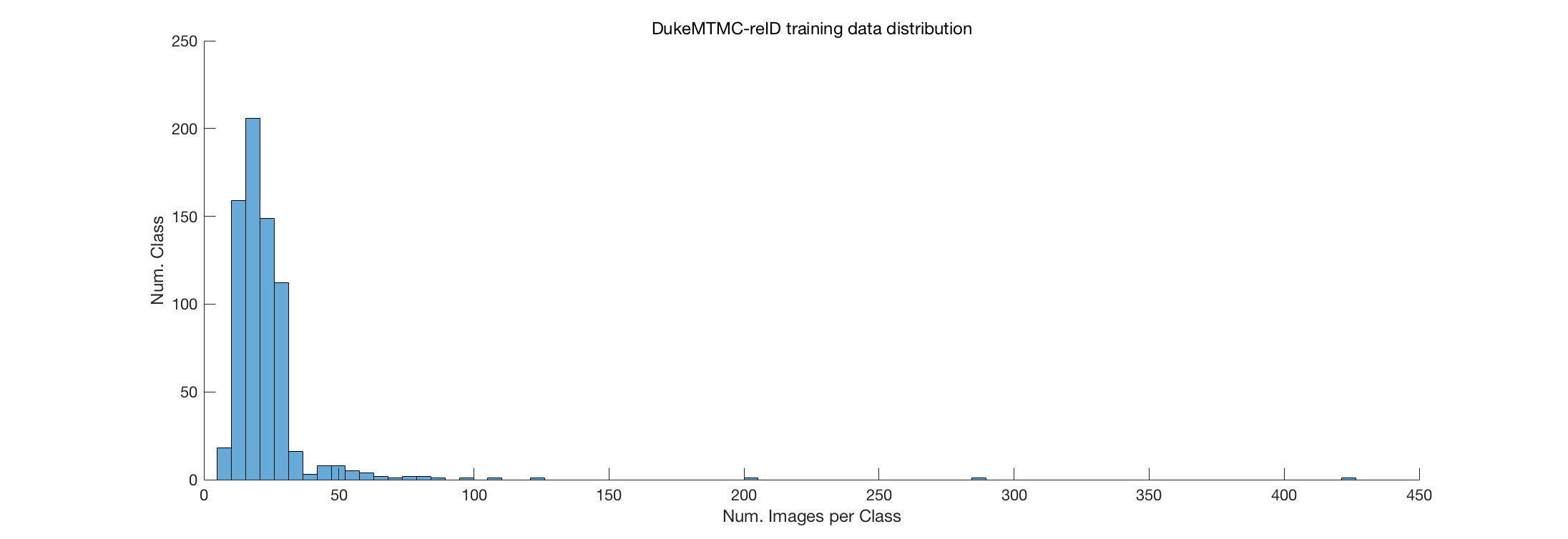

DukeMTMC-reID

intro: Person re-ID evaluation code for the DukeMTMC-reID dataset (including dataset download)

github: https://github.com/layumi/DukeMTMC-reID_evaluation

DukeMTMC-reID_baseline (Matlab)

github: https://github.com/layumi/DukeMTMC-reID_baseline

Code for IDE baseline on Market-1501

github: https://github.com/zhunzhong07/IDE-baseline-Market-1501
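The leaderboard reports rank-1 (the first point of the CMC curve) and mAP. Below is a minimal pure-Python sketch of how these are commonly computed under single-query evaluation, assuming a precomputed query-to-gallery distance list. It follows the usual Market-1501 convention of discarding gallery images that share both identity and camera with the query, but ignores the datasets' junk-image labels, and its AP may differ slightly from the interpolation used by the official scripts. `evaluate_query` and `rank1_and_map` are hypothetical names; the official evaluation code linked above should be used for reported numbers.

```python
from typing import List, Sequence, Tuple


def evaluate_query(
    dists: Sequence[float],
    gallery_ids: Sequence[int],
    gallery_cams: Sequence[int],
    query_id: int,
    query_cam: int,
    max_rank: int = 10,
) -> Tuple[List[int], float]:
    """Return (CMC indicator up to max_rank, average precision) for a single query."""
    # Rank gallery images by ascending distance to the query.
    order = sorted(range(len(dists)), key=lambda j: dists[j])
    # Discard same-identity images taken by the query's own camera.
    order = [j for j in order
             if not (gallery_ids[j] == query_id and gallery_cams[j] == query_cam)]
    matches = [gallery_ids[j] == query_id for j in order]
    if not any(matches):
        raise ValueError("query has no valid match in the gallery")
    first_hit = matches.index(True)
    # CMC: 1 from the rank of the first correct match onwards (0-based ranks).
    cmc = [1 if r >= first_hit else 0 for r in range(max_rank)]
    # AP: mean of precision@r taken at every rank r holding a correct match.
    hits, precisions = 0, []
    for r, is_match in enumerate(matches, start=1):
        if is_match:
            hits += 1
            precisions.append(hits / r)
    return cmc, sum(precisions) / len(precisions)


def rank1_and_map(results: Sequence[Tuple[List[int], float]]) -> Tuple[float, float]:
    """Average per-query results into (rank-1 accuracy, mAP)."""
    rank1 = sum(cmc[0] for cmc, _ in results) / len(results)
    mean_ap = sum(ap for _, ap in results) / len(results)
    return rank1, mean_ap


# Toy gallery: identities and camera ids of four images.
g_ids, g_cams = [1, 2, 1, 3], [1, 2, 2, 2]
q1 = evaluate_query([0.1, 0.2, 0.3, 0.4], g_ids, g_cams, query_id=1, query_cam=1)
q2 = evaluate_query([0.5, 0.6, 0.7, 0.1], g_ids, g_cams, query_id=3, query_cam=1)
print(rank1_and_map([q1, q2]))  # → (0.5, 0.75)
```

In the toy run, the nearest gallery image for `q1` is dropped by the same-camera rule, so its correct match only appears at rank 2 (AP 0.5), while `q2` is matched at rank 1 (AP 1.0). Rank-5 and rank-10 are read from the same CMC list as `cmc[4]` and `cmc[9]`.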

Datasets

Overview of Re-ID datasets

https://robustsystems.coe.neu.edu/sites/robustsystems.coe.neu.edu/files/systems/projectpages/reiddataset.html

Attribute-related datasets

RAP: http://rap.idealtest.org/

Attribute for Market-1501 and DukeMTMC_reID: https://vana77.github.io/

Video-related datasets

Mars: http://liangzheng.org/Project/project_mars.html

PRID2011: https://www.tugraz.at/institute/icg/research/team-bischof/lrs/downloads/

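NLP-related datasets

Searching images with natural language: http://xiaotong.me/static/projects/person-search-language/dataset.html

Searching for videos containing a given pedestrian with natural language: http://www.mi.t.u-tokyo.ac.jp/projects/person_search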

Tutorials

1st Workshop on Target Re-Identification and Multi-Target Multi-Camera Tracking

https://reid-mct.github.io/

Target Re-Identification and Multi-Target Multi-Camera Tracking

http://openaccess.thecvf.com/CVPR2017_workshops/CVPR2017_W17.py

Person Re-Identification: Theory and Best Practice

http://www.micc.unifi.it/reid-tutorial/

Experts

Listed in No Particular Order

Resources

Re-id Resources

https://wangzwhu.github.io/home/re_id_resources.html

Zhuanzhi

http://www.zhuanzhi.ai/topic/2001183057160970

Zhihu

Person Re-identification: https://zhuanlan.zhihu.com/personReid

Person Re-id: https://zhuanlan.zhihu.com/re-id

Topic: https://www.zhihu.com/topic/20087378/hot

Blogs

Introduction to person re-identification: https://www.jianshu.com/p/98cc04cca0ae

Deep-learning-based Person Re-ID (survey): https://blog.csdn.net/linolzhang/article/details/71075756

Person re-identification, including a comparison with pedestrian detection: https://blog.csdn.net/liuqinglong110/article/details/41699861

Person re-identification survey (Person Re-identification: Past, Present and Future): https://blog.csdn.net/auto1993/article/details/74091803

Person re-identification: http://cweihang.cn/ml/reid/
